Billions of agents talking to billions of agents

About six weeks ago I started using OpenClaw and wrote about my initial approach. OpenClaw is a new category of personal AI assistant evolved beyond the chatbot. It’s an open source, self-hosted agent you could say has been doing the numbers. In my last newsletter, I referred to it like this:

I believe it is what Siri was supposed to be, and what the big labs wish they could make.

What the big labs wish they could make? Directionally correct I suppose, but my timeline was off. When I wrote those words on February 12, I assumed we were at least a year away from seeing anything comparable from the big labs. Further out still for enterprise adoption.

Too much risk involved for the corporate world, I figured. They’d sleep while we all hack and explore. But sleep isn’t the right word. It’s more like stalk.

Personal AI assistants will soon be as ubiquitous as smartphones. They are so useful that once they hit mainstream adoption, it will be harder to not have one than to just have one. Just like with smartphones, there will be a tipping point. We’ll have one for home and one for work, and billions of agents will talk to humans and other agents. Sure, things are going to get weird.

Anyway, the same day I published those words I happened to hear Peter Steinberger (@steipete), the goated builder of OpenClaw, on Lex Fridman discussing how he was talking to and getting offers from Meta and OpenAI. Ok, I thought, they are moving fast on this. A couple of days later, our man announced he was joining OpenAI. He mentioned his “next mission is to build an agent that even my mum can use,” which is honestly a pretty awesome goal. Right now the thing is for hackers, but everyone should have access to this technology.

Having an assistant running 24/7 on my Mac mini, I’ve been impressed by how useful it can be in my life. If you thought chatbots were great, you ain’t seen nothing yet, baby. This technology is early stage, but it’s a step change beyond anything we’ve encountered interacting with LLMs up until now. We’re at a major point in computing history.

I’m not some SaaS bro writing slop code for zero-user apps. Yea, there is a lot of noise online about that right now. Ugh. I’m more interested in how this technology can learn about us and help us become better family members, think deeper about the problems that matter to us, and be healthier and more efficient human beings.

OpenClaw does this by learning about you over time, and it has a hackable memory system. It doesn’t wait for you to ask. It reaches out proactively, surfacing things you need to know before you think to check. You have to experience this a few times to get why it’s different.

It also occurred to me how wickedly good personal AI assistants could be in a work environment. There are real hurdles like security boundaries and terms of service, but we’re already starting to see some of these problems get solved. They’re worth solving.

First, let’s go over how we got here.

A trip down memory lane

We all had our ChatGPT moment. Do you remember the first time you had a fluent and pretty reasonable conversation with a model? Magic. Actually, math, but yea magic.

Models did make progress, and aside from that we got three things in 2023 that made them considerably more interesting:

Function calling in June

Web search in October

JSON mode in November

The problem with ChatGPT was that it couldn’t “do” anything. Function calling rolled out to fix that. Now we could write pieces of code that the model could decide to call at the right time with the right parameters. That was the theory anyway, but anyone who remembers working with function calling at the time knows the models were pretty terrible at it.
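To make that concrete, here is a minimal Python sketch of the dispatch side of function calling. The `get_weather` tool, the `TOOLS` registry, and the shape of the `tool_call` dict are all assumptions made up for illustration. They follow the general pattern of a function name plus JSON arguments, not any provider’s exact API.

```python
import json

def get_weather(city: str, unit: str = "celsius") -> str:
    """Hypothetical tool: a real one would call a weather service."""
    return f"Sunny in {city}, 21 degrees {unit}"

# Registry of functions the model is allowed to call (hypothetical).
TOOLS = {"get_weather": get_weather}

def dispatch(tool_call: dict) -> str:
    """Run the function the model picked, with the parameters it chose."""
    name = tool_call["name"]
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    try:
        # Arguments arrive as a JSON string written by the model,
        # so they can be malformed or use the wrong parameter names.
        args = json.loads(tool_call["arguments"])
        return TOOLS[name](**args)
    except (json.JSONDecodeError, TypeError) as exc:
        # Return the error as text so it can be fed back to the model.
        return f"error: bad arguments for {name}: {exc}"

# Simulated model output; in practice this comes back from the API.
good_call = {"name": "get_weather", "arguments": '{"city": "Oslo"}'}
bad_call = {"name": "get_weather", "arguments": '{"town": "Oslo"}'}
print(dispatch(good_call))
print(dispatch(bad_call))
```

The second call is the kind of thing early models did a lot: right function, wrong parameter name. Handing the error text back to the model instead of crashing is one reasonable way to cope with it.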

Another limitation skeptics of LLMs were quick to point out was the training cutoff date, which was a bigger deal then than it is today. Models take a while to pre-train and go through post-training before they are released, so people loved to dunk on them for being “so dumb” about stuff that happened in the last few months.

Side note, I wouldn’t recommend dunking on LLMs too much. It comes across weirdly insecure, and if you give them six months you’ll be the one feeling dumb. We seem to blow through one barrier after another. There is nothing stopping progress from here on out, everything is an engineering challenge that will be solved.

Anyway, web search filled that gap, but in my opinion it didn’t get decently good until o3, which was announced in late 2024 and released in April 2025. Likewise, JSON mode was announced at OpenAI’s first DevDay, which I attended in November 2023. It was essentially a predecessor to structured outputs, which didn’t come out until August 2024.

Structured outputs is what we use today. It’s so much better than JSON mode because now I can ask not only for a guaranteed parsable JSON response but for one that conforms to a specific schema I define. Function calling likewise had to wait until better models came along to be useful, probably only starting in 2024 as well.
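The difference between the two guarantees is easy to show in a few lines of Python. This is a simplified sketch, not how any provider implements it: the `SCHEMA` dict is a made-up stand-in for a real JSON Schema, and `conforms` only checks field names and top-level types.

```python
import json

# Hypothetical schema: field name -> required Python type.
SCHEMA = {"title": str, "priority": int, "tags": list}

def parses(raw: str) -> bool:
    """What JSON mode promises: the text is valid JSON."""
    try:
        json.loads(raw)
        return True
    except json.JSONDecodeError:
        return False

def conforms(raw: str, schema: dict) -> bool:
    """What structured outputs add: the JSON also matches the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    # Exactly the expected fields, no more and no fewer.
    if not isinstance(data, dict) or set(data) != set(schema):
        return False
    return all(isinstance(data[key], kind) for key, kind in schema.items())

wrong_shape = '{"name": "Fix login", "priority": "high"}'
right_shape = '{"title": "Fix login", "priority": 1, "tags": ["auth"]}'
print(parses(wrong_shape), conforms(wrong_shape, SCHEMA))
print(parses(right_shape), conforms(right_shape, SCHEMA))
```

Both strings parse, so both would pass under JSON mode. Only the second one is something downstream code can rely on without defensive checks.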

Models are only as good as the context provided to them

Over the past few years, a handful of phrases have really stuck in my head:

“Infinitely stable dictatorships,” a phrase Ilya Sutskever ominously warned about in a doomer moment in the 2019 iHuman documentary.

“Sparks of AGI” from the title of a Microsoft research paper published in 2023.

“Machines of loving grace,” Dario Amodei’s essay you should definitely read, which borrows its title from Richard Brautigan’s 1967 poem.

“Models are only as good as the context provided to them.”

The last one is perhaps not exactly poetry, but it’s one I’ve often heard repeated, and for good reason. As far as I can tell, it was first stated by Mahesh Murag (@MaheshMurag) at the AI Engineer Summit in New York, 2025. At any rate, it’s a simple truth I keep coming back to. In my own AI engineering experience over the past few years, the models get stronger, but you need the right context or they’re useless.

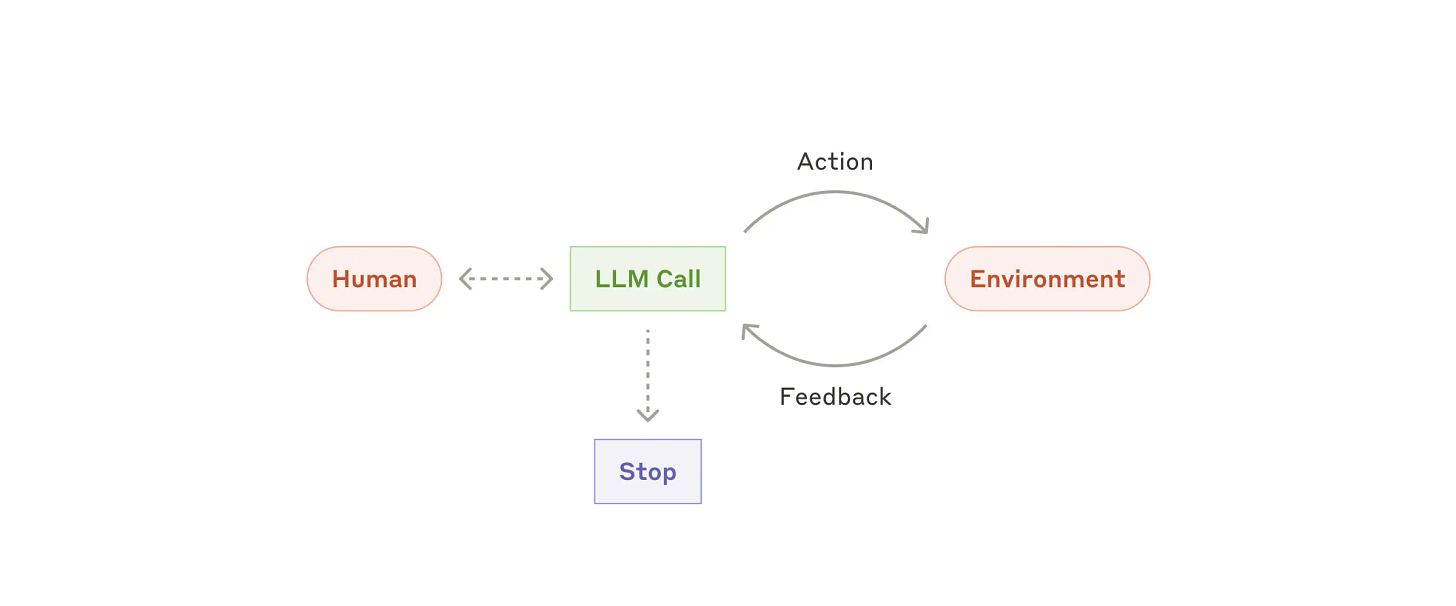

I'll get back to that thought in a moment, but there is one other piece of this puzzle we need to fill in: agents. “Agents are models using tools in a loop,” a pithy definition from Hannah Moran, and one I still favor.

And trust me, trying to define what the heck even is an agent was a thing for sure. There was plenty of talk about environment that struck me as weirdly pedantic. I think folks were trying to abstractly account for things like physical robots we don't even have yet. Meanwhile, environment turned out to be… bash. An agent can ostensibly do anything a human can do on a computer via the command line (bash) and, increasingly, via screen-based interaction (computer use).
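Here is what “models using tools in a loop” can look like as a bare-bones Python sketch, with the shell as the environment. The `scripted_model` function is a hypothetical stand-in for a real LLM call, and the reply format (`type`, `command`, `text`) is an assumption invented for this example.

```python
import subprocess

def run_shell(command: str) -> str:
    """Tool: run a shell command and return its combined output.
    A real agent should sandbox this; model-chosen commands are risky."""
    result = subprocess.run(
        command, shell=True, capture_output=True, text=True, timeout=30
    )
    return (result.stdout + result.stderr).strip()

def scripted_model(messages: list) -> dict:
    """Stand-in for a real LLM call (hypothetical). It asks for one shell
    command, then answers once it sees a tool result in the history."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "command": "echo hello from the shell"}
    return {"type": "final", "text": f"The shell said: {tool_results[-1]['content']}"}

def run_agent(task: str, model=scripted_model, max_turns: int = 5) -> str:
    """The loop: call the model, run the tool it asked for, repeat."""
    messages = [{"role": "user", "content": task}]
    for _ in range(max_turns):
        reply = model(messages)
        if reply["type"] == "final":
            return reply["text"]
        output = run_shell(reply["command"])
        # Append both the request and the result so the model sees them next turn.
        messages.append({"role": "assistant", "content": reply["command"]})
        messages.append({"role": "tool", "content": output})
    return "stopped: turn limit reached"

print(run_agent("Say hello using the shell"))
```

Swap `scripted_model` for a real model call and the structure stays the same. The turn limit is there because a loop driven by a model needs a hard stop.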

When Claude Code was released in early 2025 in tandem with Claude 3.7 Sonnet, it was no doubt a watershed moment. The model was meh but improving. A lot of us were already using Claude 3.5 Sonnet to write actual production code, but it was more of a “let’s see if we can do this” or “hey wouldn’t it be fun if I just had the LLM write this for me,” and a whole lot of copy pasta between the chatbot and your IDE that no sane human being should have to do.

I’m sure we could have just written the code ourselves in less time during that period, but what is the fun in that? I was ready to automate my job away. Anyone else who tried to use chatbots to write serious code can tell you, the model really needs context of your codebase, and it ideally needs that iterative feedback loop from linters, compilers, and testing. Claude Code automated this loop.

If you spent time toiling away back and forth with the chatbot, you got pretty good at hand rolling some artistically packaged context blocks, refining as you go. These are essentially the actions Claude Code automates for you, and if you did them by hand in that period, you look at today’s agentic output differently.

All I see is system prompt, user message, tool call, parameters, return, and so on. When I’m having trouble building agents or working with agents I go back to this simple thing: look at what is being sent to the model and what is coming back. With open source models, that extends to the inference server logs and, on rare occasions, even reading the server’s source code to make sure you truly understand what’s going on.

It’s not like I’m looking at the raw request of every agentic turn, but when there is trouble this is exactly where I go. This is a bit of a superpower by the way. Keep that in your back pocket.
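If you want a starting point for that habit, here is a small Python sketch that prints a conversation the way I tend to read it: one line per message, role first. The message format (a list of dicts with `role` and `content`) is an assumption loosely modeled on common chat APIs, and the `transcript` below is invented sample data, not a real log.

```python
import json

def dump_request(messages: list, max_chars: int = 80) -> str:
    """Render each message on one line: index, role, and a truncated body."""
    lines = []
    for i, msg in enumerate(messages):
        body = msg.get("content")
        if not isinstance(body, str):
            # Tool calls and other structured payloads are shown as compact JSON.
            body = json.dumps(body, sort_keys=True)
        if len(body) > max_chars:
            body = body[:max_chars] + "..."
        lines.append(f"[{i}] {msg.get('role', '?'):<9} {body}")
    return "\n".join(lines)

# Invented sample turn: system prompt, user message, tool call, tool return.
transcript = [
    {"role": "system", "content": "You are a coding agent."},
    {"role": "user", "content": "Why is the build failing?"},
    {"role": "assistant", "content": {"tool": "run_shell", "parameters": {"command": "make test"}}},
    {"role": "tool", "content": "2 tests failed: test_login, test_logout"},
]
print(dump_request(transcript))
```

Calling something like this right before the request goes out shows you exactly what the model is being handed, which is usually where the bug is.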

I thought you were gonna talk about AI assistants

The genius of OpenClaw is how it’s layered. Start with an agent not unlike Claude Code, built on the Pi framework in this case. It scales through bash, CLIs, and agent skills, giving it practically unlimited access to your personal context. Your messages, email, calendar, notes, health data, whatever you wire up.

Layer a persistent memory system on top of that, and now it knows you and evolves. Finally, it reaches out to you and you to it through whatever messaging apps you already use. This is what makes it different from a chatbot. A chatbot answers questions. This thing lives on your machine, accumulates context over time, and acts on your behalf.
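To illustrate the memory layer in the simplest possible terms, here is a Python sketch of a file-backed memory that survives between sessions and gets folded into the system prompt. This is not how OpenClaw actually implements memory. The `Memory` class, the JSON-lines file, and `build_system_prompt` are all hypothetical, made up to show the idea.

```python
import json
import tempfile
from pathlib import Path

class Memory:
    """Hypothetical file-backed memory: one JSON object per line."""

    def __init__(self, path: Path):
        self.path = path

    def remember(self, note: str) -> None:
        # Append-only, so earlier notes are never overwritten.
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps({"note": note}) + "\n")

    def recall(self) -> list:
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            return [json.loads(line)["note"] for line in f if line.strip()]

def build_system_prompt(memory: Memory) -> str:
    """Fold everything remembered so far into the context sent to the model."""
    notes = "\n".join(f"- {n}" for n in memory.recall())
    return "You are a personal assistant.\nWhat you know about the user:\n" + notes

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "memory.jsonl"
    Memory(path).remember("Prefers morning meetings")
    # A new Memory object stands in for a later session reading the same file.
    print(build_system_prompt(Memory(path)))
```

Because the memory is just a file on your own machine, you can open it, edit it, or delete lines from it, which is roughly what I mean by hackable.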

Big tech is opening the door

Sure, you can integrate most of these data sources into ChatGPT or Claude already. But this hits different. With OpenClaw, it’s my machine, running on my home network. When it browses the web, it does so from a residential IP address. I text it through my normal messaging app, iMessage. It’s not a product someone else controls. It’s mine.

As far as I can tell, the platforms are starting to meet us halfway. Peter Steinberger built gogcli to integrate Google products early on, with plenty of “you can just do things” energy. But Google themselves recently released a Workspace CLI built for humans and AI agents. That’s a pretty significant signal. It reads to me as a direct response to the new class of personal assistants that OpenClaw opened the door to.

It appears Microsoft isn’t far behind. A few days ago Brad Groux (@BradGroux) posted on X that more than a dozen Microsoft employees are involved in getting OpenClaw working on Teams with six dedicated to the effort. They’re dogfooding it internally. Microsoft is actively making Teams available to this class of AI assistant.

Clearly, the platforms see where this is going and they’re laying the rails. All of OpenClaw is built without first-party support. Now imagine what happens with it.

Build over buy

On the enterprise side, we’re seeing a similar signal from a different angle. Stripe, Ramp, and Coinbase have all built their own internal coding agents rather than buying off the shelf. The approaches differ: Stripe forked an open source agent, Ramp composed on top of one, and Coinbase built from scratch. But I feel like the underlying conclusion is the same: nobody understands your business like you do.

In short, all signs point to build right now. The platform vendors are shipping first-party CLIs and the best engineering organizations are building their own agents in-house. The writing is on the wall.

Get started now

If you’re reading this newsletter, my advice hasn’t changed: get started. Set it up. Dogfood it.

There will be polished personal AI assistants ready for mainstream use eventually. Right now, while it’s still rough, is the best time to learn. Same energy as hand-rolling context blocks for chatbots. It was painful, and the models weren’t quite there either, but the people who did it came out the other side understanding how these systems actually work.

I believe that understanding compounds. When the safe version arrives, you won’t be learning. You’ll be building.