The most dangerous command in computing

Howdy folks, things are moving fast this week. I’ve got a grab bag for you and some thoughts. Some of it is exciting, some of it is terrifying, and one item made somebody’s Mac melt down.

Let’s get into it.

Will PRs get unalived?

The mighty pull request, or merge request if you speak GitLab, is the atomic unit of collaborative software development.

Plenty of folks are coming for PRs in 2026.

Eleanor Berger (@intellectronica) called this last year already:

Are PRs cooked? Man, in a way I’m getting low key nostalgic already for their loss and they haven’t even gone yet, but the truth is though things have to change. We’re in a transition period of historical proportions, so a lot of things will be quite different on the other side. Now is the time to start rethinking everything.

I’ve been watching this play out on our team. We went from a world where a PR represented hours of focused human work, and the review was a meaningful checkpoint, to a world where the agent generates the PR and another agent reviews it. The human is no longer on either side of that loop by default. We’re approvers now, not authors or reviewers.

The interesting question to me isn’t whether PRs die, but what replaces the trust mechanism they provided. PRs were never really about the code diff. They were about one human saying to another: I looked at this, and I think it’s right. When both the author and the reviewer are agents, that social contract is gone entirely. The human’s role shifts from doing the work to deciding whether the work should be done at all.

Meanwhile, Peter Steinberger (@steipete) has a different and complementary take on what’s happening to PRs in open source. He posted on X:

PRs as prompt requests. That’s a good reframe. The value isn’t the code anymore, it’s more about the problem identification. The maintainer takes the problem statement, rewrites the solution with their own agent, credits the original reporter. The back-and-forth review cycle collapses into a single step. I think this is where open source PRs are heading fast.

Codex meets OpenClaw

Speaking of agents doing the work, here’s something cool: Codex App Server Bridge just landed as a community plugin for OpenClaw.

What this does is let you bind a Telegram or Discord conversation to a Codex thread. You text it, it routes to Codex. You can resume threads, switch models, review diffs, trigger planning mode, all from a chat interface. One command to bind, then just talk.

Why does this matter? Because it collapses two things that have been separate: your personal assistant and your coding agent. Right now most of us context switch between them. You ask your assistant something, then you switch to Claude Code or Codex to actually build it, then you come back to the assistant to figure out what to do next. This plugin means your assistant can route coding work to Codex without you leaving the conversation.

This is the direction everything is heading. Not one mega agent that does it all, but a personal agent that orchestrates specialized agents on your behalf. Your assistant becomes the router, not the executor. OpenClaw already supports this with sub-agents and sessions, but having much improved Codex integration is a signal. The ecosystem is filling in the gaps faster than any single project could.
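To make the router-not-executor shape concrete, here is a toy sketch. It is not the plugin's code or OpenClaw's API: the `AssistantRouter` class, its `register`, `bind`, and `route` methods, and the conversation ids are all hypothetical names made up for illustration.

```python
from typing import Callable, Dict

# Hypothetical sketch: a personal assistant that routes each conversation
# to whichever specialized agent that conversation has been bound to.
Handler = Callable[[str], str]


class AssistantRouter:
    def __init__(self, default: Handler) -> None:
        self._default = default
        self._agents: Dict[str, Handler] = {}
        self._bindings: Dict[str, str] = {}  # conversation id -> agent name

    def register(self, name: str, handler: Handler) -> None:
        self._agents[name] = handler

    def bind(self, conversation_id: str, agent_name: str) -> None:
        if agent_name not in self._agents:
            raise KeyError(f"unknown agent: {agent_name}")
        self._bindings[conversation_id] = agent_name

    def route(self, conversation_id: str, message: str) -> str:
        # Bound conversations go to their agent; everything else stays
        # with the assistant itself.
        name = self._bindings.get(conversation_id)
        handler = self._agents[name] if name else self._default
        return handler(message)


router = AssistantRouter(default=lambda m: f"assistant: {m}")
router.register("coding", lambda m: f"coding agent: {m}")
router.bind("telegram:123", "coding")
```

One bind, then every message in that conversation lands on the coding agent, while unbound conversations keep talking to the assistant.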

Google’s TurboQuant: cheaper inference is coming

Google Research dropped TurboQuant this week. It’s a compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup with zero accuracy loss.

I’ll spare you the full paper summary, but the key insight is worth understanding. The KV cache is the memory bottleneck for long-context inference. Every token the model has seen gets cached so it doesn’t have to recompute attention from scratch. As context windows get longer, that cache gets expensive fast.

TurboQuant compresses this cache down to 3 bits per value without any fine-tuning or accuracy loss. It does this through a clever two-stage approach: first a rotation-based quantization step (PolarQuant) that simplifies the geometry of the data, then a 1-bit error correction pass (QJL) that eliminates the bias introduced by compression.

Inference cost is often the bottleneck for agent adoption. If you’re running an agent 24/7 that’s reading your email, monitoring your calendar, processing documents, you’re burning tokens constantly. Anything that makes inference cheaper and faster directly enables more people to run agents. A 6x reduction in KV cache memory means you can serve longer contexts on the same hardware, or serve the same contexts on cheaper hardware.
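Some back-of-the-envelope arithmetic shows why the cache matters. This is a rough sketch, not anything from the paper: the model shape below (32 layers, 8 KV heads, head dimension 128) and the 128,000-token context are hypothetical numbers picked for illustration, and the only figure taken from the announcement is the 6x reduction.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   tokens: int, bits_per_value: float) -> float:
    """Approximate KV cache size: one key and one value vector
    per token, per KV head, per layer."""
    values = 2 * layers * kv_heads * head_dim * tokens
    return values * bits_per_value / 8


# Hypothetical model shape and context length, for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
TOKENS = 128_000

baseline = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, TOKENS, bits_per_value=16)
compressed = baseline / 6  # applying the 6x reduction quoted above

GIB = 1024 ** 3
print(f"16-bit cache for one sequence: {baseline / GIB:.2f} GiB")
print(f"after a 6x reduction:          {compressed / GIB:.2f} GiB")
```

The cache grows linearly with the token count, so the same factor applies at any context length: either six times the context in the same memory, or the same context in a sixth of it.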

This won’t show up in your API bill tomorrow. But when Google deploys this across their fleet, and when it inevitably gets adopted by other providers, the cost curve for always-on agents gets a lot more favorable. The future where everyone has a personal agent is partly a cost problem, and research like this chips away at it.

Cloudflare Dynamic Workers: sandboxing, 100x faster

Cloudflare announced Dynamic Workers yesterday, and this one is significant for anyone thinking about agent security.

The problem: when an agent writes and executes code, that code needs to run somewhere safe. The current answer is mostly containers. Containers work, but they’re slow to start (hundreds of milliseconds), expensive in memory (hundreds of megabytes), and hard to scale when every user interaction might need its own sandbox.

Dynamic Workers use V8 isolates instead. A few milliseconds to start, a few megabytes of memory. That’s 100x faster and 10-100x more memory efficient than containers. You can spin up a new isolate for every single request and throw it away afterward without flinching.

Here’s the part I found most interesting. They’re pushing TypeScript as the interface language for agent-to-API communication, replacing OpenAPI specs. In their example, a TypeScript interface describing a chat room API is a handful of lines. The equivalent OpenAPI spec is so long you have to scroll. Fewer tokens to describe the API means cheaper inference and better agent comprehension.

The globalOutbound option is also pretty clever. You can intercept every HTTP request the sandboxed code makes, inject credentials, block unauthorized calls, or rewrite requests. The agent never sees the actual secrets. This is credential injection done right: the agent has the capability without having the keys.
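The idea is easier to see in code. What follows is a conceptual sketch of the interception pattern in Python, not Cloudflare's API: `OutboundRequest`, `intercept`, and the `SECRETS_BY_HOST` table are hypothetical names, and the real feature runs inside the Workers runtime rather than in a function like this.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional
from urllib.parse import urlsplit


@dataclass
class OutboundRequest:
    url: str
    headers: Dict[str, str] = field(default_factory=dict)


# Hypothetical policy table: allowed host -> credential held by the host
# process. The sandboxed code never gets to read this.
SECRETS_BY_HOST = {"api.example.com": "Bearer real-token-held-by-the-host"}


def intercept(request: OutboundRequest) -> Optional[OutboundRequest]:
    """Return the request to forward, or None to block it."""
    host = urlsplit(request.url).hostname
    if host not in SECRETS_BY_HOST:
        return None  # block calls to hosts that are not on the allowlist
    headers = dict(request.headers)
    # Overwrite any Authorization header the sandbox set with the real one.
    headers["Authorization"] = SECRETS_BY_HOST[host]
    return OutboundRequest(url=request.url, headers=headers)
```

The sandboxed code asks for `https://api.example.com/...` with no credentials at all, and the interceptor decides whether the call goes out and what it carries.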

This matters because sandboxing is one of the main unsolved problems for production agents. OpenShell (which just got added to mainline OpenClaw this week, by the way) takes a Linux-native approach with Landlock/seccomp/netns. Cloudflare is taking a V8 isolate approach. Different layers, same problem: let agents execute code without giving them the keys to the kingdom.

Competition here is good. The more people working on agent sandboxing, the faster we all get to a world where running an agent doesn’t require a tin foil hat. I still wear mine, for the record, but I’d like the option not to.

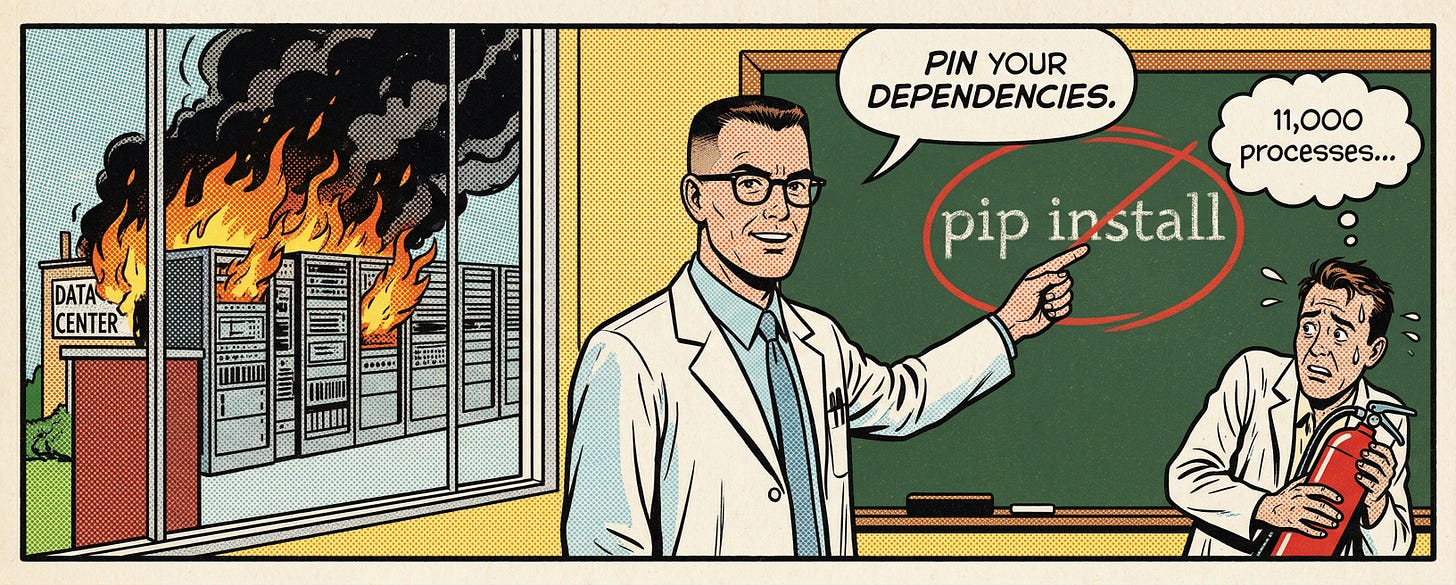

LiteLLM got compromised (today)

And now for the terrifying one. LiteLLM versions 1.82.7 and 1.82.8 were compromised on PyPI today. If you use LiteLLM, stop what you’re doing and check your version right now. If you installed or upgraded on or after March 24, 2026, you may be affected.
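If you want a quick way to check, a minimal sketch using the standard library's `importlib.metadata` looks like this. The helper names `is_compromised` and `check_installed` are mine, and the script only inspects the Python environment it runs in, so run it with the interpreter of each environment you care about.

```python
from importlib import metadata

# The two versions named in this post as compromised.
COMPROMISED = {"1.82.7", "1.82.8"}


def is_compromised(version: str) -> bool:
    return version.strip() in COMPROMISED


def check_installed(package: str = "litellm") -> str:
    try:
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        return f"{package} is not installed in this environment"
    if is_compromised(installed):
        return f"{package} {installed} is one of the compromised versions"
    return f"{package} {installed} is not one of the listed versions"


if __name__ == "__main__":
    print(check_installed())
```

A clean result only tells you what is installed now. If a bad version was ever installed and later upgraded away, the payload may already have run.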

What happened: somebody uploaded a malicious version directly to PyPI, bypassing the normal GitHub release process. The payload harvests SSH keys, cloud credentials, .env files, database passwords, crypto wallets, and basically anything sensitive on your machine. It encrypts the haul with a hardcoded RSA key and ships it off to a domain masquerading as LiteLLM infrastructure. If it finds a Kubernetes service account, it tries to read every secret in the cluster and install persistent backdoors across all nodes.

The discovery story is almost darkly comic. Callum McMahon at FutureSearch wrote the explainer. His machine stuttered to a halt, CPU pegged at 100%, 11,000 processes running. The malware had a bug: it used a .pth file that triggers on every Python interpreter startup, so the malicious subprocess would itself trigger the .pth file, which would spawn another subprocess, which would trigger it again. Fork bomb. The malware’s own poor quality is what made it visible.
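The mechanism is worth knowing about: Python's `site` module reads `.pth` files in site-packages at startup, and any line that begins with `import` followed by a space or tab gets executed. Here is a rough audit sketch that lists such lines. The function names are my own, and a hit is not proof of malware, since some legitimate packages and editable installs use the same trick.

```python
import site
from pathlib import Path
from typing import Iterable, List, Tuple


def executable_pth_lines(directories: Iterable[str]) -> List[Tuple[str, str]]:
    """Find .pth lines that the site module would execute at startup."""
    findings = []
    for directory in directories:
        root = Path(directory)
        if not root.is_dir():
            continue
        for pth in sorted(root.glob("*.pth")):
            for line in pth.read_text(errors="replace").splitlines():
                # site executes lines beginning with "import" plus space or tab;
                # other non-comment lines are just paths added to sys.path.
                if line.startswith(("import ", "import\t")):
                    findings.append((str(pth), line))
    return findings


def site_directories() -> List[str]:
    # Some older virtualenv setups lack these helpers, hence the getattr guards.
    dirs = list(getattr(site, "getsitepackages", lambda: [])())
    user_site = getattr(site, "getusersitepackages", lambda: None)()
    if user_site:
        dirs.append(user_site)
    return dirs


if __name__ == "__main__":
    for path, line in executable_pth_lines(site_directories()):
        print(f"{path}: {line[:120]}")
```

Because the hook fires on every interpreter start, a payload that launches Python from inside it re-triggers itself, which is exactly the runaway loop described above.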

As Andrej Karpathy pointed out on X, without that bug it would have gone unnoticed for much longer. A competent attacker would have had a clean exfiltration with nobody the wiser.

Here’s what I find most instructive about this. We’ve spent a year talking about prompt injection as the existential threat to AI agents. Simon Willison (@simonw) has been hammering on the lethal trifecta: agents with access to private data, exposure to untrusted content, and the ability to take action. And he’s right, that is a real problem.

But what actually got people this week? A supply chain attack. pip install with an unpinned dependency. No prompt injection required. The MCP client (Cursor, in this case) auto-downloaded the latest version via uvx, which pulled in the compromised LiteLLM, which ran the malware before any model was even involved.

Securing agents is not just about securing the AI part. It’s about securing the entire stack they run on, the same boring supply chain hygiene we’ve been preaching for years. Pin your dependencies. Use lock files with checksums. Audit before upgrading. The agent era doesn’t change any of this. It just raises the stakes because your agent has access to everything.
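As a starting point, here is a small sketch that flags lines in a requirements file lacking an exact `==` pin or a `--hash=` option. It is a simplified check I wrote for illustration, not a full parser of pip's requirements format: the function name is hypothetical, and it ignores environment markers, URLs, and nested `-r` includes.

```python
import re
from typing import List

# Matches an exact pin such as "example-pkg==1.0.0".
PINNED = re.compile(r"^[A-Za-z0-9._\-\[\],]+\s*==\s*[^\s;]+")
COMMENT = re.compile(r"(^|\s)#.*$")


def unpinned_or_unhashed(requirements_text: str) -> List[str]:
    """Return requirement lines lacking an exact pin or a --hash option."""
    joined = requirements_text.replace("\\\n", " ")  # fold line continuations
    problems = []
    for raw in joined.splitlines():
        line = COMMENT.sub("", raw).strip()
        if not line or line.startswith("-"):
            continue  # skip blanks and option lines like "-r other.txt"
        if not PINNED.match(line) or "--hash=" not in line:
            problems.append(line)
    return problems
```

Once every line carries a hash, `pip install --require-hashes -r requirements.txt` makes pip refuse anything whose archive does not match, which is the property you want when an index entry gets swapped out from under you.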

And if you’re running MCP servers locally, think hard about whether they need to be local. FutureSearch moved to a remote MCP architecture after this. The server doesn’t run on the user’s machine anymore, which collapses the entire attack surface. That’s worth considering.

Wrapping up

A lot happened this week. We’re rethinking everything (as we should), inference is getting cheaper, sandboxing is getting faster, and somebody learned the hard way that pip install is still the most dangerous command in computing.

The infrastructure is catching up to the ambition. That’s the pattern I keep seeing. The models were ahead of the tooling six months ago. Now the tooling is closing the gap. Sandboxing, compression, agent wiring, all of it maturing in parallel.

Just pin your dependencies.