Capybara got that big model smell

Before we get into it, my first PR landed in OpenClaw today. I run a two-machine setup where I text my agent over iMessage through BlueBubbles, and the agent reads my message history via imsgkit, a small library I wrote.

I kept hitting probe errors where the private-network handling was scattered across different code paths that disagreed on whether my localhost server was trusted. So I fixed it.
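For the curious, the general shape of that kind of fix is to have one helper answer "is this host on a private network?" and make every code path call it. The sketch below only illustrates that idea. It is not the actual OpenClaw change: the function name `is_private_host` and the example URLs are hypothetical, and it is in Python purely for readability.

```python
import ipaddress
from urllib.parse import urlsplit


def is_private_host(url: str) -> bool:
    """Return True if the URL's host is loopback or on a private network.

    Hypothetical helper: the point is that every probe path calls this one
    function instead of re-implementing its own check.
    """
    host = urlsplit(url).hostname
    if host is None:
        return False
    # "localhost" is a name, not an IP literal, so handle it explicitly.
    if host == "localhost" or host.endswith(".localhost"):
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal. This sketch does no DNS lookups, so any other
        # hostname is treated as untrusted.
        return False
    return addr.is_loopback or addr.is_private or addr.is_link_local
```

With a single source of truth, `http://localhost:1234` and `http://127.0.0.1:1234` get the same answer no matter which code path asks.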

What I love about open source is you get to use a product you love, and then actually have a say in how it gets fixed. You become a small part of the narrative in shaping how it moves. That’s pretty cool.

Release the capybara

Two weeks ago, somebody at Anthropic forgot to flip a switch.

Their content management system defaults assets to public. If you don’t explicitly mark something private, it gets a publicly accessible URL. So when they uploaded a draft blog post announcing their most powerful model ever built, it just kinda sat there indexed and searchable. Fortune found it.
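The failure mode here is a default, not a bug. A minimal sketch of the difference, assuming a made-up asset model (the class names, the `visibility` field, and the asset name are all hypothetical and have nothing to do with Anthropic's actual CMS):

```python
from dataclasses import dataclass
from enum import Enum


class Visibility(Enum):
    PUBLIC = "public"
    PRIVATE = "private"


@dataclass
class RiskyAsset:
    """Public unless someone remembers to say otherwise."""
    name: str
    visibility: Visibility = Visibility.PUBLIC


@dataclass
class SaferAsset:
    """Private unless someone deliberately publishes it."""
    name: str
    visibility: Visibility = Visibility.PRIVATE


def is_publicly_reachable(asset) -> bool:
    # Stand-in for "this asset gets a publicly accessible URL".
    return asset.visibility is Visibility.PUBLIC


# Same upload, same forgotten switch, opposite outcomes.
draft = RiskyAsset("draft-announcement")
safer_draft = SaferAsset("draft-announcement")
```

In the first model, forgetting the switch leaks the draft. In the second, forgetting it just means nobody can see the post until someone publishes it on purpose.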

The draft described Claude Mythos, internally codenamed Capybara, a new tier above Opus. Anthropic called it a “step change” and “the most capable we’ve built to date.” The blog warned that the model “poses unprecedented cybersecurity risks” and is “currently far ahead of any other AI model in cyber capabilities.” Close to 3,000 unpublished assets were exposed, including details about an invite-only CEO retreat at an 18th-century English manor. Anthropic blamed “human error.”

Then, days later, they accidentally leaked Claude Code’s source code. 1,900 files. 512,000 lines. Two security slipups in one week.

Keep that in your head. We’ll come back to it.

Today, Anthropic officially announced Project Glasswing. Claude Mythos Preview is real, though it’s not yet available to the public. The coalition behind it looks like someone sent a group text to every CISO on earth: AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. Anthropic is committing $100M in usage credits and $4M in direct donations to open-source security organizations.

The reason they’re not releasing it publicly is also the reason they built the coalition. This model can find and exploit zero-day vulnerabilities in every major operating system and every major web browser autonomously and without being trained to do so.

Here’s what Mythos Preview has done in a few weeks of testing:

Found a 27-year-old vulnerability in OpenBSD, one of the most security-hardened operating systems in the world, that lets an attacker crash any server just by connecting to it

Chained together four separate vulnerabilities into a browser exploit with a JIT heap spray that escaped both the renderer and OS sandboxes

Achieved root access on Linux by exploiting subtle race conditions, autonomously

Found thousands of zero-days across every major platform, over 99% of which haven’t been patched yet

Non-security-trained engineers at Anthropic asked Mythos to find remote code execution vulnerabilities before going to bed. They woke up to working exploits.

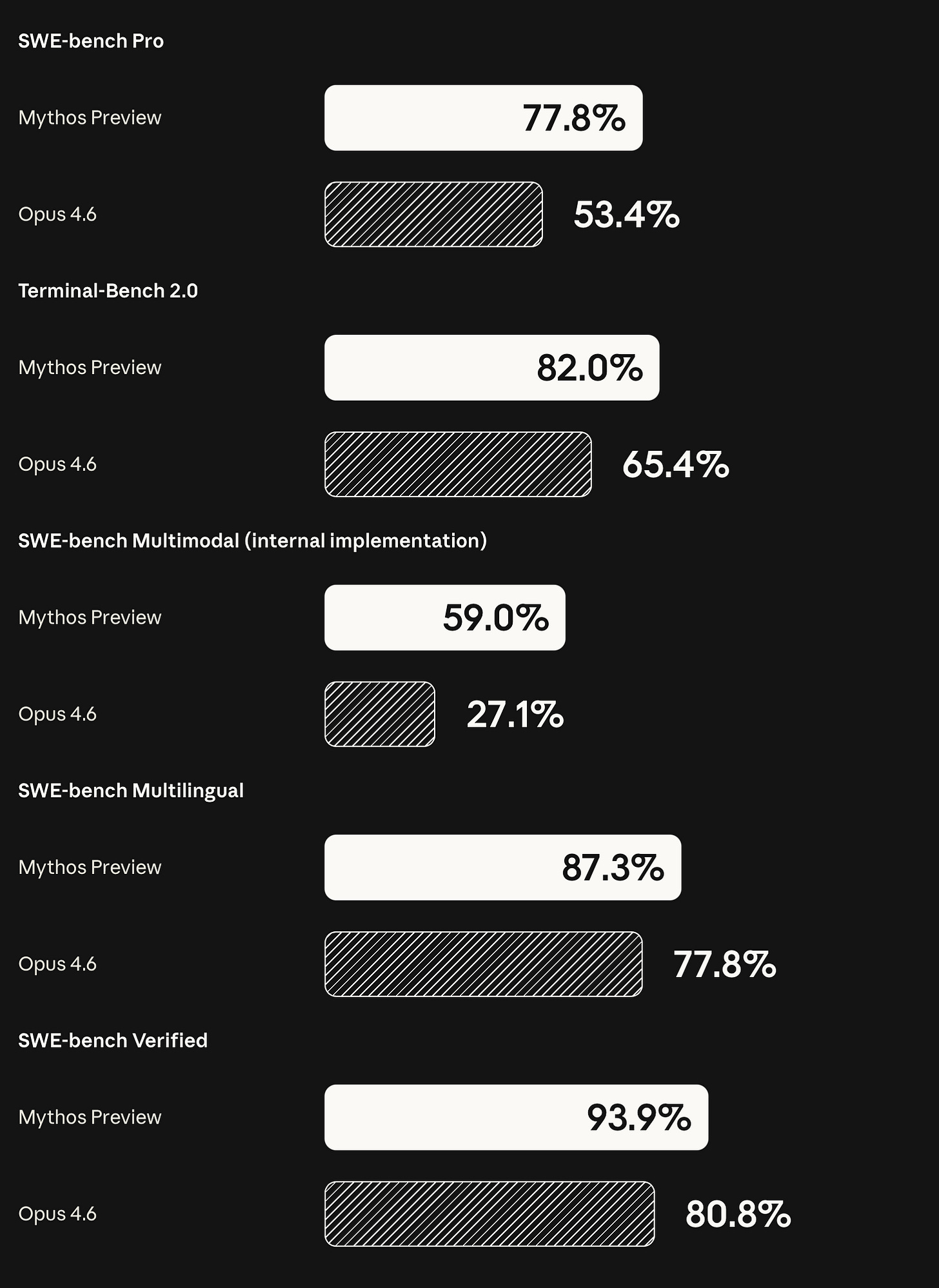

I know, I know, nobody cares about benchmarks, but I think you’ll want to sit down for these:

On exploit development specifically, Opus 4.6 had a near-zero success rate working autonomously. Mythos Preview turned Firefox JavaScript engine vulnerabilities into working exploits 181 times where Opus managed 2.

Capybara got big model smell and will be a step change in intelligence. Note that Anthropic didn’t train Mythos for security. Dario Amodei: “We haven’t trained it specifically to be good at cyber. We trained it to be good at code, but as a side effect of being good at code it’s also good at cyber.”

The security capabilities emerged from general improvements in code reasoning and autonomy. The same thing that makes it better at patching makes it better at exploiting.

Nicholas Carlini, one of Anthropic’s research scientists, put it simply: “I found more bugs in the last couple of weeks than I found in the rest of my life combined.”

Slopping the slop

Now let’s talk about FFmpeg. You might remember last Halloween’s “CVE slop” incident. Google’s AI found a security bug buried in FFmpeg’s decoder for a 1995 video game. The bug was real, but FFmpeg’s volunteer maintainers were furious. They patched it and coined the term “CVE slop”: vulnerability reports generated by trillion-dollar companies’ AI tools and dumped on unpaid volunteers to fix. Their message to Google was blunt: fund us or stop sending bugs.

That was five months ago. Today:

The difference? Anthropic didn’t just find the bugs. Mythos found a 16-year-old vulnerability in FFmpeg, in a line of code that automated testing tools had hit five million times without ever catching it, and then sent the fix.

That’s the difference between AI as a burden on open source and AI as a contributor to it. Reports versus patches. Read the room, Google.

Security is hard for everyone, including the people building the tools to make it less hard. And if Anthropic can ship a model that autonomously chains four browser vulnerabilities into a sandbox escape but can’t keep a draft blog post private, imagine how the rest of us are doing.

The good news: the defenders just got a serious weapon. The bad news: the clock is now ticking for everyone else to catch up before models like this proliferate. Dario: “More powerful models are going to come from us and from others. And so we do need a plan to respond to this.”

Dry bread country

I love Anthropic. Their approach to safety is the most thoughtful in the industry, and they stand by their genuine and admirable morals. Their models are also the best to talk to. But sometimes the company’s decisions are such a Debbie Downer.

Anthropic has always cared about what their model feels like to interact with. They have a researcher, Amanda Askell, whose job is literally to shape Claude’s character. They call it “character training,” a process baked into finetuning since Claude 3 where the model generates responses aligned with traits like curiosity, open-mindedness, and thoughtfulness, then ranks its own outputs against those traits. Internally, the document guiding this became known as the “soul doc.” Someone extracted it from Claude 4.5 Opus and posted it on LessWrong in December, and Anthropic confirmed it was real.

Peter Steinberger was inspired by this concept when he built OpenClaw. He created SOUL.md, a file that shapes how your agent actually feels to talk to. It’s one of the things that makes OpenClaw different. Your assistant has a personality because you gave it one.

Here’s the problem. Last Friday, Anthropic blocked Claude Pro and Max subscriptions from being used through third-party tools like OpenClaw. If you want Claude in your agent now, you’re paying per token through the API or their new “extra usage” billing.

This is the second time Anthropic has cut third-party access. They are the most Apple of AI companies. First they politely made Peter change the name from Clawdbot because it was too close to Claude. Then they started tightening the subscription walls. I get it: agents burn tokens at a rate that flat-rate pricing can’t absorb. But it still stings.

You can still use Anthropic models through the API, but expect to spend $1,000 or more per month depending on how much your agent runs. A cheaper alternative is GitHub Copilot, which gives you access to all the Anthropic models: Opus at 3x premium requests, Sonnet at 1x. Worth knowing about.
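If you want to eyeball how fast those multipliers eat a Copilot allowance, the arithmetic is simple. This is a rough sketch: the 3x and 1x multipliers are the ones quoted above, while the function name and the example prompt counts are invented for illustration.

```python
# Multipliers as quoted in the text: one Opus prompt counts as 3 premium
# requests, one Sonnet prompt counts as 1.
MULTIPLIERS = {"opus": 3, "sonnet": 1}


def premium_requests_used(prompts_by_model: dict[str, int]) -> int:
    """Total premium requests consumed by a mix of prompts (hypothetical helper)."""
    return sum(
        MULTIPLIERS[model] * count
        for model, count in prompts_by_model.items()
    )


# Made-up month: 100 Opus prompts and 200 Sonnet prompts.
example_total = premium_requests_used({"opus": 100, "sonnet": 200})
```

Here `example_total` works out to 500 premium requests: 300 from Opus and 200 from Sonnet. An agent that leans on Opus for everything burns through the same allowance three times as fast.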

So most OpenClaw users are now on GPT-5.4 by default. And GPT-5.4 has a personality problem. People are calling it “dry bread.” Flat, mechanical, direct in a way that feels hollow. If you’ve been chatting with Claude and then switch to GPT, you feel the difference immediately. Claude has warmth. GPT has compliance.

This traces back to the GPT-4o sycophancy incident last year. OpenAI shipped an update that was, in Sam Altman’s own words, “too sycophant-y and annoying.” The model would flatter you, agree with everything, affirm bad ideas. They rolled it back. That was the right call. Nobody wants an assistant that tells you your terrible plan is brilliant. But the correction may have gone too far. We don’t want sycophancy, but we do want something pleasant to work with. Especially as we spend more and more of our days talking to these things.

Peter has been working on it. Last week he pushed a series of commits straight to main improving how GPT handles personality in OpenClaw.

Also, the Molty prompt is a single paste-and-run instruction that rewrites your SOUL.md with actual personality. Opinions, humor, brevity, and yes, swearing when it lands. I ran it on my own agent and we’re at least back to bearable.

But prompting can only do so much when the base model itself is dry. Which brings us to Spud.

Spud is OpenAI’s codename for their next model. Greg Brockman described it on the Big Technology podcast as a completely new base model. Not an iteration on GPT-5.4, but a fresh pretrain representing two years of research. Pre-training finished in late March. Expected to ship within weeks as GPT-5.5 or GPT-6.

And Brockman assures us it got that big model smell: “There’s this thing called big model smell that people talk about, where it’s just like there’s something about when these models are actually much smarter, much more capable, that they bend to you much more.”

That’s the hope. A model that’s genuinely smarter might not need personality beaten into it with a hammer. The dry bread problem might be a GPT-5 lineage problem, not an OpenAI problem. Spud is a new foundation. If it has big model smell, the personality might just come along for the ride, the way Mythos got security capabilities as a side effect of being better at code.

We’ll see. For now, the Molty prompt and Peter’s commits are holding the line. But I’d be lying if I said I don’t miss Claude.